The Unix philosophy as a design heuristic has taken on a life of its own outside of Unix and its descendants, and it seems to be taking root in the community of library-focused open source software. The repository software community in particular seems to be moving away from monolithic all-in-one solutions toward modular systems in which many different pieces of software talk to each other and work together to achieve a common goal. Developers are taking successful non-library-focused open source software and co-opting it into their stack. This “don’t reinvent the wheel” approach increases the overall quality of the system and reduces developer time, since the chosen software already exists, works well, and has its own development community. Consider the Fedora Commons community’s use of the Solr search server and the Fuseki triplestore. Building their own search and triplestore capabilities would have required far more developer time, all to reimplement something that already exists. By using externally developed software like Solr, the Fedora community can rely on a mature project that has its own development trajectory and community of bug squashers.

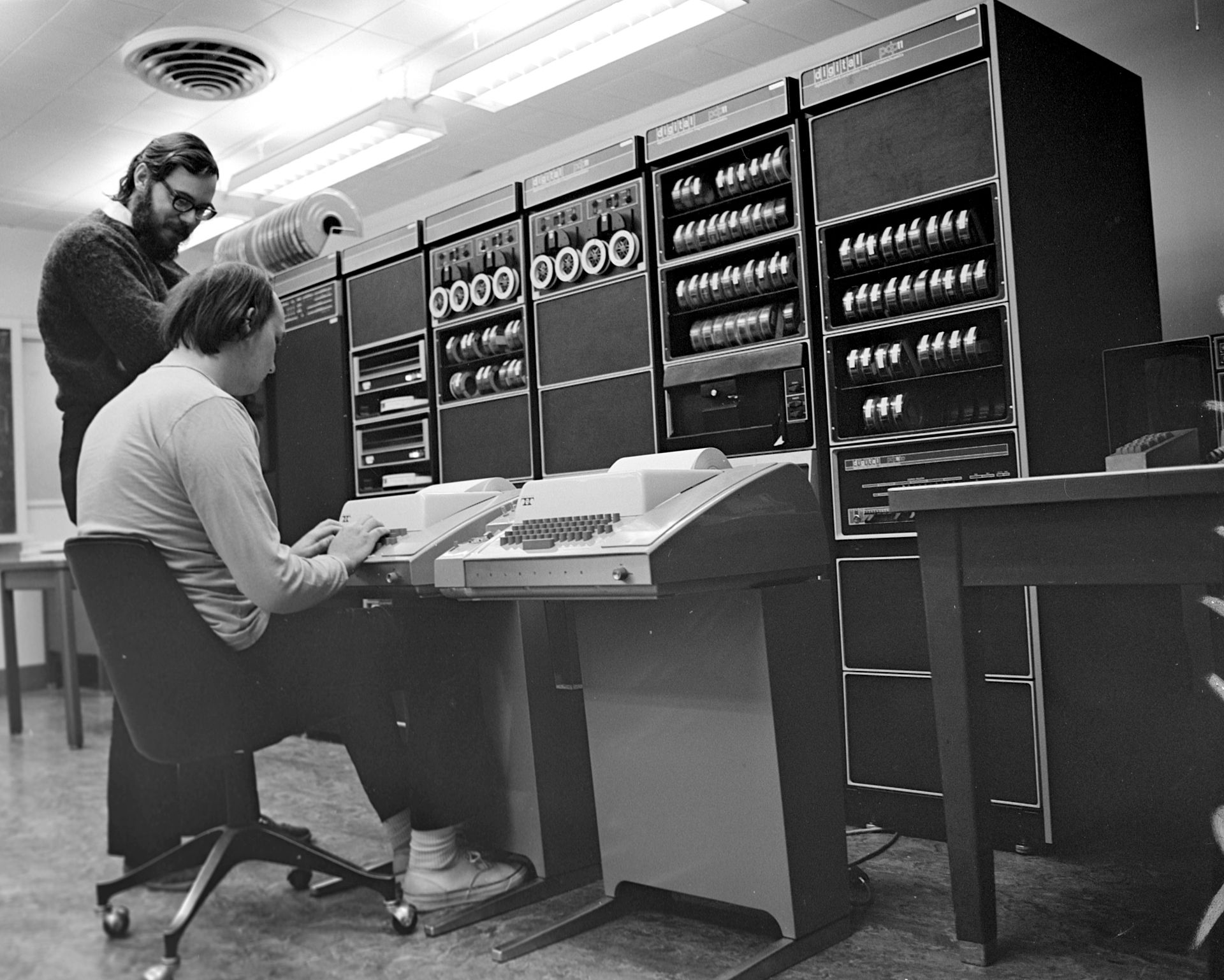

The Unix philosophy need not be tied just to software, either. I’ve found myself applying it in a managerial sense lately on certain projects, creating small, focused teams that each do one thing well and pass the work amongst themselves (as opposed to everyone just doing whatever). Another way of looking at it is understanding and capitalizing on the strengths of your coworkers: I’m okay at managing projects but our project manager is much better, and our project manager is okay at coding but I’m much better. It doesn’t make much sense for our project manager to spend time learning to code when he has me, just like it doesn’t make sense for me to focus on project management when I have him (of course there are benefits to cross-training, but in practice the return on investment is much smaller). We both serve the organization better by focusing on and developing our strengths, and leaning on others’ expertise for our weaknesses. This lets us work faster and increases the quality of our output, and in this day and age time and effort are as valuable and limited as the system memory and disk space of a 1970s mainframe.